Errors occur every day in healthcare institutions and research facilities. Medical lab errors can be very costly: each mislabeled sample can set a hospital back hundreds (sometimes thousands) of dollars and can cause irreparable harm to the physical and mental health of the patient. Errors in the research lab also have a broad impact, skewing results and wasting years of effort along with precious materials, which are often irreplaceable.

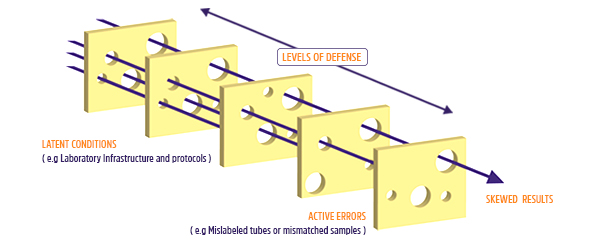

The Swiss Cheese Model

In medicine, there are two ways of dealing with errors. One, termed the “bad apple” approach, places the blame squarely on the individual who made the error.1 It assumes that people should never make an error; therefore, anyone who does is reprimanded or removed from their position. This also happens frequently in research, where the blame for mislabeled samples rests solely on the graduate student or laboratory staff performing the experiment. In hospitals, this type of thinking has been replaced by a “systems” approach, where errors are treated as consequences, not causes, of policies and protocols that can eventually lead to lapses or mistakes. Human error is expected in varying degrees, and staff are trained to catch mistakes and recover from them faster.2 Here, an open environment is key, where people are free to discuss errors. Because no one has to dodge blame, there is less incentive to cover up mistakes, and strategies can be implemented to prevent and manage errors in the future.1

One of the best-known systems for dealing with error is the “Swiss Cheese” model, originally devised by James T. Reason and Dante Orlandella at the University of Manchester. The model is based on barriers, each pictured as a slice of Swiss cheese with many holes. The holes are where errors can get through. When the slices are layered on top of one another, a hole in one slice is usually blocked by the next, preventing lapses and mistakes from creeping through. However, when errors made by medical staff (termed “active failures” in the model) and inherent flaws in protocols or procedures (termed “latent conditions”) line up through every barrier, there is an opportunity for a potentially disastrous patient outcome.2
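The layering argument can be made concrete with a little arithmetic. The following is a minimal sketch and not part of Reason's model itself: it assumes each barrier misses an error independently and at a fixed rate, which real latent conditions often violate (the whole point of the model is that holes can line up). The barrier names and miss rates are hypothetical.

```python
from math import prod


def breach_probability(miss_rates):
    """Probability that a single error passes every barrier.

    Assumes the barriers fail independently, so the individual
    miss rates simply multiply.
    """
    rates = list(miss_rates)
    for rate in rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"miss rate must be between 0 and 1, got {rate}")
    return prod(rates)


# Hypothetical miss rates: the fraction of errors each barrier fails to catch.
barriers = {
    "double-check by a colleague": 0.10,
    "barcode verification": 0.05,
    "automated protocol check": 0.02,
}

one_layer = breach_probability([0.10])
all_layers = breach_probability(barriers.values())

print(f"one barrier:    {one_layer:.4%} of errors get through")
print(f"three barriers: {all_layers:.4%} of errors get through")
```

Even imperfect barriers compound quickly when they are stacked, which is why the systems approach favors adding layers over hunting for a single flawless one.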

Employing the Swiss Cheese Model in the Lab

In the lab, similar barriers can be implemented to mitigate errors. Labeling samples is one of the cheapest and easiest ways to avoid mistakes; without physical labels on your tubes, you would struggle to remember the identity of your samples, especially when dealing with hundreds of them at a time. Using the right label for the right application (e.g., cryogenic labels for samples that will be stored in liquid nitrogen) ensures your labels don’t fail because of the environment. Barcodes add another barrier by letting you track samples and reagents as they are processed. A laboratory information management system (LIMS) is another way to organize your workflow and improve the efficiency of your lab. As samples move from one stage to the next, you scan them and the LIMS records each specimen, confirming that the appropriate samples are at the correct step of the protocol. It’s also important to monitor your laboratory operations, including the temperature, humidity, air pressure, and CO2 and O2 levels of your lab, freezers, refrigerators, and incubators, as well as backup generators and HVAC. Systems like XiltriX™ can continuously monitor your vital equipment, using predictive analytics to support preventative action and predictive maintenance before you lose any valuable assets.
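To illustrate the scan-and-record idea, here is a minimal sketch of a step check, assuming a simple linear protocol. It is not the API of any real LIMS: the `SampleTracker` class, the step names, and the barcode `S-001` are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical linear protocol; a real workflow would define its own steps.
PROTOCOL_STEPS = ["received", "aliquoted", "extracted", "stored"]


class StepOrderError(Exception):
    """Raised when a sample is scanned at a step it should not be at yet."""


@dataclass
class SampleTracker:
    steps: list
    history: dict = field(default_factory=dict)  # barcode -> completed steps

    def scan(self, barcode, step):
        """Record a scan, rejecting any step that is out of protocol order."""
        if step not in self.steps:
            raise ValueError(f"unknown step: {step}")
        done = self.history.get(barcode, [])
        expected = self.steps[len(done)] if len(done) < len(self.steps) else None
        if step != expected:
            raise StepOrderError(
                f"{barcode}: scanned at '{step}', expected '{expected}'"
            )
        self.history[barcode] = done + [step]


tracker = SampleTracker(PROTOCOL_STEPS)
tracker.scan("S-001", "received")

try:
    tracker.scan("S-001", "extracted")  # skips the 'aliquoted' step
except StepOrderError as err:
    print(f"blocked: {err}")
```

The check acts as one more slice of cheese: a tube picked up at the wrong bench is flagged at the scanner instead of being discovered after the experiment.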

Common Reasons Why People Make Errors

Not all errors can be diagnosed in the same way. The key is to understand why mistakes occur in the first place and to design solutions that reduce the probability of error in the future, as well as detect and correct errors if they do happen.3 Using medical errors as an example, some of the most common reasons include tiredness, nutritional deprivation or dehydration, and emotional stress, all of which can cause fatigue in one form or another and lead to lapses in judgment and performance.4 Researchers face similar situations, though not to the same extent as doctors or nurses. Picture a medical laboratory technician swamped with specimens to analyze, or a newly hired principal investigator nearing a grant deadline who needs to finish some final experiments. Some procedures are very monotonous (in the lab, think repeated pipetting or labeling) or overly familiar, so the healthcare professional or researcher loses awareness of the task at hand and slips up.5

Attitude also plays a role in determining how effectively errors are managed. Several attitudes are associated with impaired judgment, making it more likely that an error will occur. Staff can be impulsive, anti-authority (don’t tell me what to do!), convinced that lapses won’t ever happen to them, too eager to lead when they shouldn’t be the ones to take charge, or resigned to thinking that their actions, both successes and failures, don’t matter (oh, the life of a Ph.D. student!).6

With all that said, it’s important to know what measures ought to be in place to prevent errors from doing any damage. We’ve previously covered some of the strategies that hospitals can use to reduce the likelihood of mistakes, but with so much that can go wrong, no one solution can ever be applied as a quick fix. It’s up to everyone at an organization to look out for one another and ensure the highest quality of medical care and scientific advancement.

LabTAG by GA International is a leading manufacturer of high-performance specialty labels and a supplier of identification solutions used in research and medical labs as well as healthcare institutions.

References:

- Kennedy D. Analysis of sharp-end, frontline human error: Beyond throwing out “bad apples.” J Nurs Care Qual. 2004;19(2):116-122.

- Reason J. Human error: Models and management. BMJ. 2000;320(7237):768-770.

- Etchells E, Juurlink D, Levinson W. Medication errors: The human factor. CMAJ. 2008;178(1):63-64.

- Brennan PA, Mitchell DA, Holmes S, Plint S, Parry D. Good people who try their best can have problems: Recognition of human factors and how to minimise error. Br J Oral Maxillofac Surg. 2016;54:3-7.

- De Los Santos MJ, Ruiz A. Protocols for tracking and witnessing samples and patients in assisted reproductive technology. Fertil Steril. 2013. doi:10.1016/j.fertnstert.2013.09.029

- Renouard F, Perrault-Pierre E. [Is human behavior the leading cause of complications in medical practice?]. Orthod Fr. 2016;87:3-11.