Artificial intelligence (AI) has been a popular topic ever since John McCarthy introduced the term in 1956. It quickly captured Hollywood’s imagination and became the plot device for many blockbuster movies, including the Terminator franchise. Until recently, however, AI remained largely the stuff of science fiction. Only now have computers become powerful enough to make AI appreciably functional, allowing some of the world’s top companies, such as Google, IBM, and Apple, to design systems that learn on their own. Gartner, a research and advisory company that publishes a yearly chart of the most hyped technologies (the Gartner Hype Cycle), has placed AI-associated technologies at the top of its list.1 With companies like PathAI, Freenome, and BenevolentAI entering the market, it hasn’t taken long for scientists to bring artificial intelligence into the clinic to solve complex biological and medical problems.

So, how does AI work exactly?

In computer science, AI refers to intelligent agents that perceive their environment and act to maximize their chance of reaching a goal. Two broad approaches are commonly distinguished: conventional machine learning and artificial neural networks (ANNs), although ANNs are themselves a family of machine-learning methods. Conventional machine learning improves its performance by learning from pre-specified inputs. For example, a user might load a database of images of a certain plant species into a computer and provide the AI with a “training data set” that records the features characteristic of that species. The AI can then be shown a new set of plant pictures and asked whether each one belongs to that species. Based on its answers, combined with user feedback, it adapts and becomes more accurate as the amount of data and feedback it encounters grows. However, this type of AI struggles when data sets become extremely large, because it depends on the user to convert the raw data into a usable form (choosing and measuring the relevant features) before the AI can analyze it.1
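To make the plant example concrete, here is a minimal Python sketch of a classifier that learns from user-supplied features. It uses a nearest-centroid rule, which is just one simple choice among many and not necessarily what any particular product uses; the two features (leaf length and leaf width) and all of the numbers are hypothetical. Notice that the user decides what to measure; the algorithm only averages and compares.

```python
from math import dist


class NearestCentroidClassifier:
    """Assigns a sample to the class whose average (centroid) feature vector is closest."""

    def __init__(self) -> None:
        # label -> list of feature tuples the user has measured and labelled
        self.examples: dict[str, list[tuple[float, ...]]] = {}

    def add_example(self, features: tuple[float, ...], label: str) -> None:
        # Initial training data and later user feedback are handled the same way:
        # every confirmed example shifts that class's centroid.
        self.examples.setdefault(label, []).append(features)

    def centroid(self, label: str) -> tuple[float, ...]:
        points = self.examples[label]
        return tuple(sum(coord) / len(points) for coord in zip(*points))

    def predict(self, features: tuple[float, ...]) -> str:
        # Euclidean distance to each class centroid; the nearest class wins.
        return min(self.examples, key=lambda label: dist(features, self.centroid(label)))


# Hypothetical hand-measured features per photo: (leaf length in cm, leaf width in cm).
clf = NearestCentroidClassifier()
clf.add_example((8.0, 3.0), "target species")
clf.add_example((8.5, 3.2), "target species")
clf.add_example((4.0, 4.0), "other species")
clf.add_example((3.5, 4.2), "other species")

print(clf.predict((7.9, 3.1)))  # target species

# User feedback: a new photo the user confirms as the target species is added back in.
clf.add_example((7.0, 3.5), "target species")
```

Every new labelled example refines the centroids, which is the "more data and feedback, more accuracy" loop described above. The catch is also visible: if the user picks uninformative features, no amount of extra data will help.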

In contrast to conventional machine learning, ANNs learn which features matter directly from the data, without requiring the user to define them beforehand. An ANN imposes its own logical structure on the information it is given, working loosely like our own brains as it picks out the details it needs to reach its goal as efficiently as possible. It stores and processes information in layers: each layer passes its output to the next, so higher layers receive increasingly processed, more complex representations of the input. Only recently have computers become powerful enough to support many such layers, with some developers building networks as deep as 100 layers; this depth is what gives “deep” learning its name.1
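The layered processing described above can be sketched in a few lines of Python. This is a minimal, hypothetical example: the layer sizes and the random, untrained weights are assumptions for illustration, and training the weights (usually done with backpropagation) is left out. What it does show is how each layer transforms the previous layer’s output and hands it up to the next.

```python
import math
import random


def relu(x: float) -> float:
    return max(0.0, x)


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases, activation):
    """One fully connected layer: every neuron takes a weighted sum of all inputs."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def make_network(layer_sizes, seed=0):
    """Hypothetical untrained network: random weights, ReLU hidden layers, sigmoid output."""
    rng = random.Random(seed)
    pairs = list(zip(layer_sizes, layer_sizes[1:]))
    layers = []
    for i, (n_in, n_out) in enumerate(pairs):
        weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        activation = sigmoid if i == len(pairs) - 1 else relu
        layers.append((weights, biases, activation))
    return layers


def forward(inputs, layers):
    """Feed the input up through each layer and keep every layer's output."""
    outputs = [list(inputs)]
    for weights, biases, activation in layers:
        outputs.append(dense_layer(outputs[-1], weights, biases, activation))
    return outputs


net = make_network([4, 8, 8, 1])  # input, two hidden layers, one output
outputs = forward([0.2, 0.7, 0.1, 0.9], net)
print([len(layer) for layer in outputs])  # [4, 8, 8, 1]
print(outputs[-1][0])  # a score between 0 and 1 (meaningless until the network is trained)
```

Keeping every layer’s output, rather than only the final one, mirrors the “more and more processed” description: `outputs[1]` is the first hidden layer’s representation of the input, `outputs[2]` the second’s, and so on.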

How efficient is AI at learning complex systems?

Google has already shown what its ANN software can accomplish. Its DeepMind deep Q-learning software learned to play Atari’s Breakout from the raw on-screen pixels, with no instruction other than to maximize its score. Working out the game mechanics on its own, it eventually played at an extremely high level. Google then raised the bar with AlphaGo, a program that learned to play the complex strategy game Go.1 Its successor, AlphaGo Zero, learned entirely by playing against itself and, within 40 days, surpassed every earlier version of AlphaGo, becoming arguably the strongest Go player of all time without any human help.2
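Deep Q-learning builds on a simpler idea, tabular Q-learning, in which an agent learns from nothing but a reward signal. The Python sketch below shows that simpler version, not DeepMind’s actual system: instead of a deep network reading Atari screens, it keeps a small table of action values for a hypothetical six-cell corridor where the only reward waits at the far end. The environment, learning rate (`alpha`), discount factor (`gamma`) and exploration rate (`epsilon`) are all illustrative assumptions.

```python
import random

N_CELLS = 6  # hypothetical corridor: cells 0..5, the reward waits in cell 5
ACTIONS = (-1, +1)  # step left or step right


def step(state, action):
    """Toy environment: the agent's only feedback is a reward of 1 for reaching the last cell."""
    nxt = min(max(state + action, 0), N_CELLS - 1)
    done = nxt == N_CELLS - 1
    return nxt, (1.0 if done else 0.0), done


def greedy(q, state, rng):
    """Pick the highest-valued action, breaking ties at random."""
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_CELLS) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit current estimates, occasionally explore.
            action = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(q, state, rng)
            nxt, reward, done = step(state, action)
            # Q-learning update: nudge Q(s, a) toward reward + discounted best next value.
            target = reward if done else reward + gamma * max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = nxt
    return q


q = train()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_CELLS - 1)]
print(policy)  # learned action per cell; after training this should be +1 (move right) everywhere
```

Nobody tells the agent to move right; it discovers that from the reward alone. Deep Q-learning replaces the table with a neural network because a screen of pixels has far too many possible states to list one by one.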

AI has also been tested in the clinic to classify and detect cancer. One group assessed how accurately an ANN could distinguish cancerous skin lesions from benign ones; in their test, the ANN performed on par with 21 board-certified dermatologists. In another test, run at the 2017 IEEE International Symposium on Biomedical Imaging (ISBI), the best algorithms for detecting lymph node metastases in women with breast cancer were deep-learning models, which significantly outperformed pathologists who were working under time constraints.2
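Comparisons like these usually rest on a few standard metrics rather than a single score. The Python sketch below computes sensitivity, specificity and accuracy for a made-up set of ten lesions; the labels and both sets of predictions are hypothetical, not data from either study.

```python
def confusion_counts(truth, predicted):
    """Count outcomes, with 1 = cancerous and 0 = benign."""
    pairs = list(zip(truth, predicted))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def summarize(truth, predicted):
    tp, tn, fp, fn = confusion_counts(truth, predicted)
    return {
        "sensitivity": tp / (tp + fn),  # share of cancers correctly flagged
        "specificity": tn / (tn + fp),  # share of benign lesions correctly cleared
        "accuracy": (tp + tn) / len(truth),  # share of all calls that were correct
    }


# Hypothetical ground truth for ten lesions and two sets of calls (made-up data).
truth = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
model = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
clinician = [1, 1, 0, 0, 0, 0, 0, 0, 1, 1]

for name, calls in (("model", model), ("clinician", clinician)):
    print(name, {k: round(v, 2) for k, v in summarize(truth, calls).items()})
```

In this toy example the model beats the hypothetical clinician on every metric, but real comparisons are rarely that clean: a classifier can trade sensitivity for specificity by moving its decision threshold, which is why studies often compare readers and algorithms across a range of thresholds.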

New applications of AI for medical diagnostics

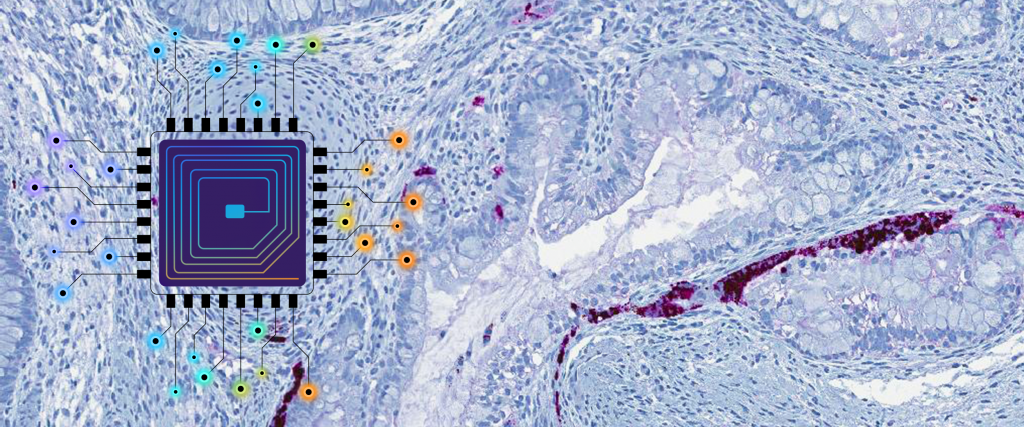

AI has already been integrated into microscopy image formation and analysis in pathology labs, and into radiology as an aid for image reconstruction and data interpretation.3 A few recent highlights show how diverse its applications have become. In August 2018, Yasui et al. published a report on using AI to automate single-molecule imaging of fluorescently tagged receptors in living cells. The authors used AI to automate immersion-oil feeding, focusing, and cell searching, producing a system that can acquire single-molecule images quickly and easily regardless of the fluorescent tag.4 Daniel Merk and his colleagues at ETH Zurich and the University of Milano-Bicocca in Milan, Italy, developed an AI-based program that designs novel drug candidates from natural product–inspired chemical leads,5 while Jörn Lötsch and his group in Germany used an AI-based program to identify a novel lipid profile for multiple sclerosis. The program detected the disease in patient samples with 96% accuracy, which could make multiple sclerosis easier to diagnose.6

Though some worry that the proliferation of AI-based technology could destroy humankind, it may also significantly improve the quality of healthcare and biomedical research. AI-based software may speed up medical analysis, making it easier for patients to obtain a diagnosis and treatment options while reducing medical errors caused by human mistakes.7 So while the day may come when The Matrix is no longer science fiction, for now, advances in computing power let us put the best aspects of AI to work for society.

LabTAG by GA International is a leading manufacturer of high-performance specialty labels and a supplier of identification solutions used in research and medical labs as well as healthcare institutions.

References:

1. Bini SA. Artificial Intelligence, Machine Learning, Deep Learning, and Cognitive Computing: What Do These Terms Mean and How Will They Impact Health Care? J Arthroplasty. 2018:1-11.

2. Nichols JA, Herbert Chan HW, Baker MAB. Machine learning: applications of artificial intelligence to imaging and diagnosis. Biophys Rev. 2018:1-8.

3. Mandal S, Greenblatt AB, An J. Imaging Intelligence: AI Is Transforming Medical Imaging Across the Imaging Spectrum. IEEE Pulse. 2018;9(5):16-24.

4. Yasui M, Hiroshima M, Kozuka J, Sako Y, Ueda M. Automated single-molecule imaging in living cells. Nat Commun. 2018;9(1):1-11.

5. Merk D, Grisoni F, Friedrich L, Gelzinyte E, Schneider G. Computer-Assisted Discovery of Retinoid X Receptor Modulating Natural Products and Isofunctional Mimetics. J Med Chem. 2018;61(12):5442-5447.

6. Lötsch J, Schiffmann S, Schmitz K, et al. Machine-learning based lipid mediator serum concentration patterns allow identification of multiple sclerosis patients with high accuracy. Sci Rep. 2018;8(1):1-16.

7. Jiang F, Jiang Y, Zhi H, et al. Artificial intelligence in healthcare: Past, present and future. Stroke Vasc Neurol. 2017;2(4):230-243.
